learning Scalaz

How many programming languages have been called Lisp in sheep’s clothing? Java brought in GC to familiar C++ like grammar. Although there have been other languages with GC, in 1996 it felt like a big deal because it promised to become a viable alternative to C++. Eventually, people got used to not having to manage memory by hand. JavaScript and Ruby both have been called Lisp in sheep’s clothing for their first-class functions and block syntax. The homoiconic nature of S-expression still makes Lisp-like languages interesting as it fits well to macros.

Recently languages are borrowing concepts from newer breed of functional languages. Type inference and pattern matching I am guessing goes back to ML. Eventually people will come to expect these features too. Given that Lisp came out in 1958 and ML in 1973, it seems to take decades for good ideas to catch on. For those cold decades, these languages were probably considered heretical or worse “not serious.”

I’m not saying Scalaz is going to be the next big thing. I don’t even know about it yet. But one thing for sure is that guys using it are serious about solving their problems. Or just as pedantic as the rest of the Scala community using pattern matching. Given that Haskell came out in 1990, the witch hunt may last a while, but I am going to keep an open mind.

Links

- Older learning Scalaz based on Scalaz 7.0

- learning-scalaz.pdf

day 0

I never set out to do a ”(you can) learn Scalaz in X days.” day 1 was written on Auguest 31, 2012 while Scalaz 7 was in milestone 7. Then day 2 was written the next day, and so on. It’s a web log of ”(me) learning Scalaz.” As such, it’s terse and minimal. Some of the days, I spent more time reading the book and trying code than writing the post.

Before we dive into the details, today I’m going to try a prequel to ease you in. Feel free to skip this part and come back later.

Intro to Scalaz

There have been several Scalaz intros, but the best I’ve seen is Scalaz talk by Nick Partridge given at Melbourne Scala Users Group on March 22, 2010:

Scalaz talk is up - http://bit.ly/c2eTVR Lots of code showing how/why the library exists

— Nick Partridge (@nkpart) March 28, 2010

I’m going to borrow some material from it.

Scalaz consists of three parts:

- New datatypes (

Validation,NonEmptyList, etc) - Extensions to standard classes (

OptionOps,ListOps, etc) - Implementation of every single general functions you need (ad-hoc polymorphism, traits + implicits)

What is polymorphism?

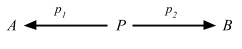

Parametric polymorphism

Nick says:

In this function

head, it takes a list ofA’s, and returns anA. And it doesn’t matter what theAis: It could beInts,Strings,Oranges,Cars, whatever. AnyAwould work, and the function is defined for everyAthat there can be.

scala> def head[A](xs: List[A]): A = xs(0)

head: [A](xs: List[A])A

scala> head(1 :: 2 :: Nil)

res0: Int = 1

scala> case class Car(make: String)

defined class Car

scala> head(Car("Civic") :: Car("CR-V") :: Nil)

res1: Car = Car(Civic)

Haskell wiki says:

Parametric polymorphism refers to when the type of a value contains one or more (unconstrained) type variables, so that the value may adopt any type that results from substituting those variables with concrete types.

Subtype polymorphism

Let’s think of a function plus that can add two values of type A:

scala> def plus[A](a1: A, a2: A): A = ???

plus: [A](a1: A, a2: A)A

Depending on the type A, we need to provide different definition for what it means to add them. One way to achieve this is through subtyping.

scala> trait Plus[A] {

def plus(a2: A): A

}

defined trait Plus

scala> def plus[A <: Plus[A]](a1: A, a2: A): A = a1.plus(a2)

plus: [A <: Plus[A]](a1: A, a2: A)A

We can at least provide different definitions of plus for A. But, this is not flexible since trait Plus needs to be mixed in at the time of defining the datatype. So it can’t work for Int and String.

Ad-hoc polymorphism

The third approach in Scala is to provide an implicit conversion or implicit parameters for the trait.

scala> trait Plus[A] {

def plus(a1: A, a2: A): A

}

defined trait Plus

scala> def plus[A: Plus](a1: A, a2: A): A = implicitly[Plus[A]].plus(a1, a2)

plus: [A](a1: A, a2: A)(implicit evidence$1: Plus[A])A

This is truely ad-hoc in the sense that

- we can provide separate function definitions for different types of

A - we can provide function definitions to types (like

Int) without access to its source code - the function definitions can be enabled or disabled in different scopes

The last point makes Scala’s ad-hoc polymorphism more powerful than that of Haskell. More on this topic can be found at Debasish Ghosh @debasishg’s Scala Implicits : Type Classes Here I Come.

Let’s look into plus function in more detail.

sum function

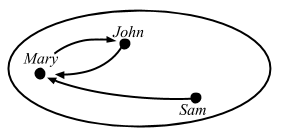

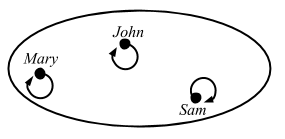

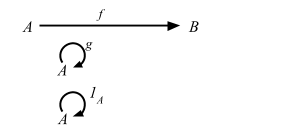

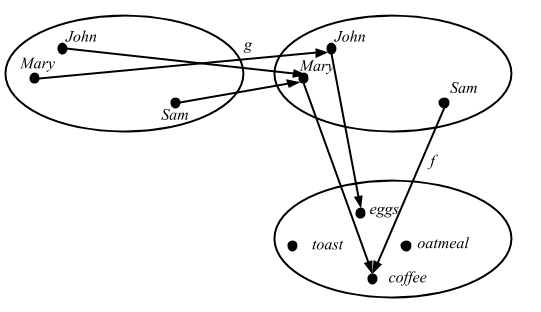

Nick demonstrates an example of ad-hoc polymorphism by gradually making sum function more general, starting from a simple function that adds up a list of Ints:

scala> def sum(xs: List[Int]): Int = xs.foldLeft(0) { _ + _ }

sum: (xs: List[Int])Int

scala> sum(List(1, 2, 3, 4))

res3: Int = 10

Monoid

If we try to generalize a little bit. I’m going to pull out a thing called

Monoid. … It’s a type for which there exists a functionmappend, which produces another type in the same set; and also a function that produces a zero.

scala> object IntMonoid {

def mappend(a: Int, b: Int): Int = a + b

def mzero: Int = 0

}

defined module IntMonoid

If we pull that in, it sort of generalizes what’s going on here:

scala> def sum(xs: List[Int]): Int = xs.foldLeft(IntMonoid.mzero)(IntMonoid.mappend)

sum: (xs: List[Int])Int

scala> sum(List(1, 2, 3, 4))

res5: Int = 10

Now we’ll abstract on the type about

Monoid, so we can defineMonoidfor any typeA. So nowIntMonoidis a monoid onInt:

scala> trait Monoid[A] {

def mappend(a1: A, a2: A): A

def mzero: A

}

defined trait Monoid

scala> object IntMonoid extends Monoid[Int] {

def mappend(a: Int, b: Int): Int = a + b

def mzero: Int = 0

}

defined module IntMonoid

What we can do is that sum take a List of Int and a monoid on Int to sum it:

scala> def sum(xs: List[Int], m: Monoid[Int]): Int = xs.foldLeft(m.mzero)(m.mappend)

sum: (xs: List[Int], m: Monoid[Int])Int

scala> sum(List(1, 2, 3, 4), IntMonoid)

res7: Int = 10

We are not using anything to do with

Inthere, so we can replace allIntwith a general type:

scala> def sum[A](xs: List[A], m: Monoid[A]): A = xs.foldLeft(m.mzero)(m.mappend)

sum: [A](xs: List[A], m: Monoid[A])A

scala> sum(List(1, 2, 3, 4), IntMonoid)

res8: Int = 10

The final change we have to take is to make the

Monoidimplicit so we don’t have to specify it each time.

scala> def sum[A](xs: List[A])(implicit m: Monoid[A]): A = xs.foldLeft(m.mzero)(m.mappend)

sum: [A](xs: List[A])(implicit m: Monoid[A])A

scala> implicit val intMonoid = IntMonoid

intMonoid: IntMonoid.type = IntMonoid$@3387dfac

scala> sum(List(1, 2, 3, 4))

res9: Int = 10

Nick didn’t do this, but the implicit parameter is often written as a context bound:

scala> def sum[A: Monoid](xs: List[A]): A = {

val m = implicitly[Monoid[A]]

xs.foldLeft(m.mzero)(m.mappend)

}

sum: [A](xs: List[A])(implicit evidence$1: Monoid[A])A

scala> sum(List(1, 2, 3, 4))

res10: Int = 10

Our

sumfunction is pretty general now, appending any monoid in a list. We can test that by writing anotherMonoidforString. I’m also going to package these up in an object calledMonoid. The reason for that is Scala’s implicit resolution rules: When it needs an implicit parameter of some type, it’ll look for anything in scope. It’ll include the companion object of the type that you’re looking for.

scala> :paste

// Entering paste mode (ctrl-D to finish)

trait Monoid[A] {

def mappend(a1: A, a2: A): A

def mzero: A

}

object Monoid {

implicit val IntMonoid: Monoid[Int] = new Monoid[Int] {

def mappend(a: Int, b: Int): Int = a + b

def mzero: Int = 0

}

implicit val StringMonoid: Monoid[String] = new Monoid[String] {

def mappend(a: String, b: String): String = a + b

def mzero: String = ""

}

}

def sum[A: Monoid](xs: List[A]): A = {

val m = implicitly[Monoid[A]]

xs.foldLeft(m.mzero)(m.mappend)

}

// Exiting paste mode, now interpreting.

defined trait Monoid

defined module Monoid

sum: [A](xs: List[A])(implicit evidence$1: Monoid[A])A

scala> sum(List("a", "b", "c"))

res12: String = abc

You can still provide different monoid directly to the function. We could provide an instance of monoid for

Intusing multiplications.

scala> val multiMonoid: Monoid[Int] = new Monoid[Int] {

def mappend(a: Int, b: Int): Int = a * b

def mzero: Int = 1

}

multiMonoid: Monoid[Int] = $anon$1@48655fb6

scala> sum(List(1, 2, 3, 4))(multiMonoid)

res14: Int = 24

FoldLeft

What we wanted was a function that generalized on

List. … So we want to generalize onfoldLeftoperation.

scala> object FoldLeftList {

def foldLeft[A, B](xs: List[A], b: B, f: (B, A) => B) = xs.foldLeft(b)(f)

}

defined module FoldLeftList

scala> def sum[A: Monoid](xs: List[A]): A = {

val m = implicitly[Monoid[A]]

FoldLeftList.foldLeft(xs, m.mzero, m.mappend)

}

sum: [A](xs: List[A])(implicit evidence$1: Monoid[A])A

scala> sum(List(1, 2, 3, 4))

res15: Int = 10

scala> sum(List("a", "b", "c"))

res16: String = abc

scala> sum(List(1, 2, 3, 4))(multiMonoid)

res17: Int = 24

Now we can apply the same abstraction to pull out

FoldLefttypeclass.

scala> :paste

// Entering paste mode (ctrl-D to finish)

trait FoldLeft[F[_]] {

def foldLeft[A, B](xs: F[A], b: B, f: (B, A) => B): B

}

object FoldLeft {

implicit val FoldLeftList: FoldLeft[List] = new FoldLeft[List] {

def foldLeft[A, B](xs: List[A], b: B, f: (B, A) => B) = xs.foldLeft(b)(f)

}

}

def sum[M[_]: FoldLeft, A: Monoid](xs: M[A]): A = {

val m = implicitly[Monoid[A]]

val fl = implicitly[FoldLeft[M]]

fl.foldLeft(xs, m.mzero, m.mappend)

}

// Exiting paste mode, now interpreting.

warning: there were 2 feature warnings; re-run with -feature for details

defined trait FoldLeft

defined module FoldLeft

sum: [M[_], A](xs: M[A])(implicit evidence$1: FoldLeft[M], implicit evidence$2: Monoid[A])A

scala> sum(List(1, 2, 3, 4))

res20: Int = 10

scala> sum(List("a", "b", "c"))

res21: String = abc

Both Int and List are now pulled out of sum.

Typeclasses in Scalaz

In the above example, the traits Monoid and FoldLeft correspond to Haskell’s typeclass. Scalaz provides many typeclasses.

All this is broken down into just the pieces you need. So, it’s a bit like ultimate ducktyping because you define in your function definition that this is what you need and nothing more.

Method injection (enrich my library)

If we were to write a function that sums two types using the

Monoid, we need to call it like this.

scala> def plus[A: Monoid](a: A, b: A): A = implicitly[Monoid[A]].mappend(a, b)

plus: [A](a: A, b: A)(implicit evidence$1: Monoid[A])A

scala> plus(3, 4)

res25: Int = 7

We would like to provide an operator. But we don’t want to enrich just one type, but enrich all types that has an instance for Monoid. Let me do this in Scalaz 7 style.

scala> trait MonoidOp[A] {

val F: Monoid[A]

val value: A

def |+|(a2: A) = F.mappend(value, a2)

}

defined trait MonoidOp

scala> implicit def toMonoidOp[A: Monoid](a: A): MonoidOp[A] = new MonoidOp[A] {

val F = implicitly[Monoid[A]]

val value = a

}

toMonoidOp: [A](a: A)(implicit evidence$1: Monoid[A])MonoidOp[A]

scala> 3 |+| 4

res26: Int = 7

scala> "a" |+| "b"

res28: String = ab

We were able to inject |+| to both Int and String with just one definition.

Standard type syntax

Using the same technique, Scalaz also provides method injections for standard library types like Option and Boolean:

scala> 1.some | 2

res0: Int = 1

scala> Some(1).getOrElse(2)

res1: Int = 1

scala> (1 > 10)? 1 | 2

res3: Int = 2

scala> if (1 > 10) 1 else 2

res4: Int = 2

I hope you could get some feel on where Scalaz is coming from.

day 1

typeclasses 101

Learn You a Haskell for Great Good says:

A typeclass is a sort of interface that defines some behavior. If a type is a part of a typeclass, that means that it supports and implements the behavior the typeclass describes.

Scalaz says:

It provides purely functional data structures to complement those from the Scala standard library. It defines a set of foundational type classes (e.g.

Functor,Monad) and corresponding instances for a large number of data structures.

Let’s see if I can learn Scalaz by learning me a Haskell.

sbt

Here’s build.sbt to test Scalaz 7.1.0:

scalaVersion := "2.11.2"

val scalazVersion = "7.1.0"

libraryDependencies ++= Seq(

"org.scalaz" %% "scalaz-core" % scalazVersion,

"org.scalaz" %% "scalaz-effect" % scalazVersion,

"org.scalaz" %% "scalaz-typelevel" % scalazVersion,

"org.scalaz" %% "scalaz-scalacheck-binding" % scalazVersion % "test"

)

scalacOptions += "-feature"

initialCommands in console := "import scalaz._, Scalaz._"

All you have to do now is open the REPL using sbt 0.13.0:

$ sbt console

...

[info] downloading http://repo1.maven.org/maven2/org/scalaz/scalaz-core_2.10/7.0.5/scalaz-core_2.10-7.0.5.jar ...

import scalaz._

import Scalaz._

Welcome to Scala version 2.10.3 (Java HotSpot(TM) 64-Bit Server VM, Java 1.6.0_51).

Type in expressions to have them evaluated.

Type :help for more information.

scala>

There’s also API docs generated for Scalaz 7.1.0.

Equal

LYAHFGG:

Eqis used for types that support equality testing. The functions its members implement are==and/=.

Scalaz equivalent for the Eq typeclass is called Equal:

scala> 1 === 1

res0: Boolean = true

scala> 1 === "foo"

<console>:14: error: could not find implicit value for parameter F0: scalaz.Equal[Object]

1 === "foo"

^

scala> 1 == "foo"

<console>:14: warning: comparing values of types Int and String using `==' will always yield false

1 == "foo"

^

res2: Boolean = false

scala> 1.some =/= 2.some

res3: Boolean = true

scala> 1 assert_=== 2

java.lang.RuntimeException: 1 ≠ 2

Instead of the standard ==, Equal enables ===, =/=, and assert_=== syntax by declaring equal method. The main difference is that === would fail compilation if you tried to compare Int and String.

Note: I originally had /== instead of =/=, but Eiríkr Åsheim pointed out to me:

@eed3si9n hey, was reading your scalaz tutorials. you should encourage people to use =/= and not /== since the latter has bad precedence.

— Eiríkr Åsheim (@d6) September 6, 2012

Normally comparison operators like != have lower higher precedence than &&, all letters, etc. Due to special precedence rule /== is recognized as an assignment operator because it ends with = and does not start with =, which drops to the bottom of the precedence:

scala> 1 != 2 && false

res4: Boolean = false

scala> 1 /== 2 && false

<console>:14: error: value && is not a member of Int

1 /== 2 && false

^

scala> 1 =/= 2 && false

res6: Boolean = false

Order

LYAHFGG:

Ordis for types that have an ordering.Ordcovers all the standard comparing functions such as>,<,>=and<=.

Scalaz equivalent for the Ord typeclass is Order:

scala> 1 > 2.0

res8: Boolean = false

scala> 1 gt 2.0

<console>:14: error: could not find implicit value for parameter F0: scalaz.Order[Any]

1 gt 2.0

^

scala> 1.0 ?|? 2.0

res10: scalaz.Ordering = LT

scala> 1.0 max 2.0

res11: Double = 2.0

Order enables ?|? syntax which returns Ordering: LT, GT, and EQ. It also enables lt, gt, lte, gte, min, and max operators by declaring order method. Similar to Equal, comparing Int and Doubl fails compilation.

Show

LYAHFGG:

Members of

Showcan be presented as strings.

Scalaz equivalent for the Show typeclass is Show:

scala> 3.show

res14: scalaz.Cord = 3

scala> 3.shows

res15: String = 3

scala> "hello".println

"hello"

Cord apparently is a purely functional data structure for potentially long Strings.

Read

LYAHFGG:

Readis sort of the opposite typeclass ofShow. Thereadfunction takes a string and returns a type which is a member ofRead.

I could not find Scalaz equivalent for this typeclass.

Enum

LYAHFGG:

Enummembers are sequentially ordered types — they can be enumerated. The main advantage of theEnumtypeclass is that we can use its types in list ranges. They also have defined successors and predecessors, which you can get with thesuccandpredfunctions.

Scalaz equivalent for the Enum typeclass is Enum:

scala> 'a' to 'e'

res30: scala.collection.immutable.NumericRange.Inclusive[Char] = NumericRange(a, b, c, d, e)

scala> 'a' |-> 'e'

res31: List[Char] = List(a, b, c, d, e)

scala> 3 |=> 5

res32: scalaz.EphemeralStream[Int] = scalaz.EphemeralStreamFunctions$$anon$4@6a61c7b6

scala> 'B'.succ

res33: Char = C

Instead of the standard to, Enum enables |-> that returns a List by declaring pred and succ method on top of Order typeclass. There are a bunch of other operations it enables like -+-, ---, from, fromStep, pred, predx, succ, succx, |-->, |->, |==>, and |=>. It seems like these are all about stepping forward or backward, and returning ranges.

Bounded

Boundedmembers have an upper and a lower bound.

Scalaz equivalent for Bounded seems to be Enum as well.

scala> implicitly[Enum[Char]].min

res43: Option[Char] = Some(?)

scala> implicitly[Enum[Char]].max

res44: Option[Char] = Some( )

scala> implicitly[Enum[Double]].max

res45: Option[Double] = Some(1.7976931348623157E308)

scala> implicitly[Enum[Int]].min

res46: Option[Int] = Some(-2147483648)

scala> implicitly[Enum[(Boolean, Int, Char)]].max

<console>:14: error: could not find implicit value for parameter e: scalaz.Enum[(Boolean, Int, Char)]

implicitly[Enum[(Boolean, Int, Char)]].max

^

Enum typeclass instance returns Option[T] for max values.

Num

Numis a numeric typeclass. Its members have the property of being able to act like numbers.

I did not find Scalaz equivalent for Num, Floating, and Integral.

typeclasses 102

I am now going to skip over to Chapter 8 Making Our Own Types and Typeclasses (Chapter 7 if you have the book) since the chapters in between are mostly about Haskell syntax.

A traffic light data type

data TrafficLight = Red | Yellow | Green

In Scala this would be:

scala> :paste

// Entering paste mode (ctrl-D to finish)

sealed trait TrafficLight

case object Red extends TrafficLight

case object Yellow extends TrafficLight

case object Green extends TrafficLight

Now let’s define an instance for Equal.

scala> implicit val TrafficLightEqual: Equal[TrafficLight] = Equal.equal(_ == _)

TrafficLightEqual: scalaz.Equal[TrafficLight] = scalaz.Equal$$anon$7@2457733b

Can I use it?

scala> Red === Yellow

<console>:18: error: could not find implicit value for parameter F0: scalaz.Equal[Product with Serializable with TrafficLight]

Red === Yellow

So apparently Equal[TrafficLight] doesn’t get picked up because Equal has nonvariant subtyping: Equal[F]. If I turned TrafficLight to a case class then Red and Yellow would have the same type, but then I lose the tight pattern matching from sealed #fail.

scala> :paste

// Entering paste mode (ctrl-D to finish)

case class TrafficLight(name: String)

val red = TrafficLight("red")

val yellow = TrafficLight("yellow")

val green = TrafficLight("green")

implicit val TrafficLightEqual: Equal[TrafficLight] = Equal.equal(_ == _)

red === yellow

// Exiting paste mode, now interpreting.

defined class TrafficLight

red: TrafficLight = TrafficLight(red)

yellow: TrafficLight = TrafficLight(yellow)

green: TrafficLight = TrafficLight(green)

TrafficLightEqual: scalaz.Equal[TrafficLight] = scalaz.Equal$$anon$7@42988fee

res3: Boolean = false

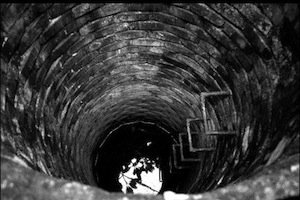

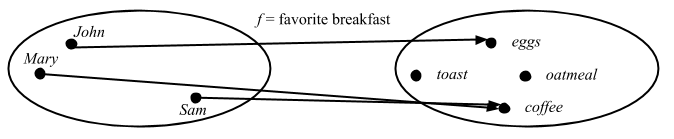

a Yes-No typeclass

Let’s see if we can make our own truthy value typeclass in the style of Scalaz. Except I am going to add my twist to it for the naming convention. Scalaz calls three or four different things using the name of the typeclass like Show, show, and show, which is a bit confusing.

I like to prefix the typeclass name with Can borrowing from CanBuildFrom, and name its method as verb + s, borrowing from sjson/sbinary. Since yesno doesn’t make much sense, let’s call ours truthy. Eventual goal is to get 1.truthy to return true. The downside is that the extra s gets appended if we want to use typeclass instances as functions like CanTruthy[Int].truthys(1).

scala> :paste

// Entering paste mode (ctrl-D to finish)

trait CanTruthy[A] { self =>

/** @return true, if `a` is truthy. */

def truthys(a: A): Boolean

}

object CanTruthy {

def apply[A](implicit ev: CanTruthy[A]): CanTruthy[A] = ev

def truthys[A](f: A => Boolean): CanTruthy[A] = new CanTruthy[A] {

def truthys(a: A): Boolean = f(a)

}

}

trait CanTruthyOps[A] {

def self: A

implicit def F: CanTruthy[A]

final def truthy: Boolean = F.truthys(self)

}

object ToCanIsTruthyOps {

implicit def toCanIsTruthyOps[A](v: A)(implicit ev: CanTruthy[A]) =

new CanTruthyOps[A] {

def self = v

implicit def F: CanTruthy[A] = ev

}

}

// Exiting paste mode, now interpreting.

defined trait CanTruthy

defined module CanTruthy

defined trait CanTruthyOps

defined module ToCanIsTruthyOps

scala> import ToCanIsTruthyOps._

import ToCanIsTruthyOps._

Here’s how we can define typeclass instances for Int:

scala> implicit val intCanTruthy: CanTruthy[Int] = CanTruthy.truthys({

case 0 => false

case _ => true

})

intCanTruthy: CanTruthy[Int] = CanTruthy$$anon$1@71780051

scala> 10.truthy

res6: Boolean = true

Next is for List[A]:

scala> implicit def listCanTruthy[A]: CanTruthy[List[A]] = CanTruthy.truthys({

case Nil => false

case _ => true

})

listCanTruthy: [A]=> CanTruthy[List[A]]

scala> List("foo").truthy

res7: Boolean = true

scala> Nil.truthy

<console>:23: error: could not find implicit value for parameter ev: CanTruthy[scala.collection.immutable.Nil.type]

Nil.truthy

It looks like we need to treat Nil specially because of the nonvariance.

scala> implicit val nilCanTruthy: CanTruthy[scala.collection.immutable.Nil.type] = CanTruthy.truthys(_ => false)

nilCanTruthy: CanTruthy[collection.immutable.Nil.type] = CanTruthy$$anon$1@1e5f0fd7

scala> Nil.truthy

res8: Boolean = false

And for Boolean using identity:

scala> implicit val booleanCanTruthy: CanTruthy[Boolean] = CanTruthy.truthys(identity)

booleanCanTruthy: CanTruthy[Boolean] = CanTruthy$$anon$1@334b4cb

scala> false.truthy

res11: Boolean = false

Using CanTruthy typeclass, let’s define truthyIf like LYAHFGG:

Now let’s make a function that mimics the

ifstatement, but that works withYesNovalues.

To delay the evaluation of the passed arguments, we can use pass-by-name:

scala> :paste

// Entering paste mode (ctrl-D to finish)

def truthyIf[A: CanTruthy, B, C](cond: A)(ifyes: => B)(ifno: => C) =

if (cond.truthy) ifyes

else ifno

// Exiting paste mode, now interpreting.

truthyIf: [A, B, C](cond: A)(ifyes: => B)(ifno: => C)(implicit evidence$1: CanTruthy[A])Any

Here’s how we can use it:

scala> truthyIf (Nil) {"YEAH!"} {"NO!"}

res12: Any = NO!

scala> truthyIf (2 :: 3 :: 4 :: Nil) {"YEAH!"} {"NO!"}

res13: Any = YEAH!

scala> truthyIf (true) {"YEAH!"} {"NO!"}

res14: Any = YEAH!

We’ll pick it from here later.

day 2

Yesterday we reviewed a few basic typeclasses from Scalaz like Equal by using Learn You a Haskell for Great Good as the guide. We also created our own CanTruthy typeclass.

Functor

LYAHFGG:

And now, we’re going to take a look at the

Functortypeclass, which is basically for things that can be mapped over.

Like the book let’s look how it’s implemented:

trait Functor[F[_]] { self =>

/** Lift `f` into `F` and apply to `F[A]`. */

def map[A, B](fa: F[A])(f: A => B): F[B]

...

}

Here are the injected operators it enables:

trait FunctorOps[F[_],A] extends Ops[F[A]] {

implicit def F: Functor[F]

////

import Leibniz.===

final def map[B](f: A => B): F[B] = F.map(self)(f)

...

}

So this defines map method, which accepts a function A => B and returns F[B]. We are quite familiar with map method for collections:

scala> List(1, 2, 3) map {_ + 1}

res15: List[Int] = List(2, 3, 4)

Scalaz defines Functor instances for Tuples.

scala> (1, 2, 3) map {_ + 1}

res28: (Int, Int, Int) = (1,2,4)

Note that the operation is only applied to the last value in the Tuple, (see scalaz group discussion).

Function as Functors

Scalaz also defines Functor instance for Function1.

scala> ((x: Int) => x + 1) map {_ * 7}

res30: Int => Int = <function1>

scala> res30(3)

res31: Int = 28

This is interesting. Basically map gives us a way to compose functions, except the order is in reverse from f compose g.

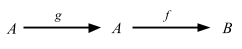

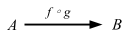

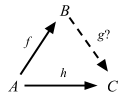

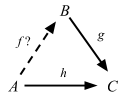

No wonder Scalaz provides ∘ as an alias of map. Another way of looking at Function1 is that it’s an infinite map from the domain to the range. Now let’s skip the input and output stuff and go to Functors, Applicative Functors and Monoids.

How are functions functors? …

What does the type

fmap :: (a -> b) -> (r -> a) -> (r -> b)for this instance tell us? Well, we see that it takes a function fromatoband a function fromrtoaand returns a function fromrtob. Does this remind you of anything? Yes! Function composition!

Oh man, LYAHFGG came to the same conclusion as I did about the function composition. But wait..

ghci> fmap (*3) (+100) 1

303

ghci> (*3) . (+100) $ 1

303

In Haskell, the fmap seems to be working as the same order as f compose g. Let’s check in Scala using the same numbers:

scala> (((_: Int) * 3) map {_ + 100}) (1)

res40: Int = 103

Something is not right. Let’s compare the declaration of fmap and Scalaz’s map operator:

fmap :: (a -> b) -> f a -> f b

and here’s Scalaz:

final def map[B](f: A => B): F[B] = F.map(self)(f)

So the order is completely different. Since map here’s an injected method of F[A], the data structure to be mapped over comes first, then the function comes next. Let’s see List:

ghci> fmap (*3) [1, 2, 3]

[3,6,9]

and

scala> List(1, 2, 3) map {3*}

res41: List[Int] = List(3, 6, 9)

The order is reversed here too.

[We can think of

fmapas] a function that takes a function and returns a new function that’s just like the old one, only it takes a functor as a parameter and returns a functor as the result. It takes ana -> bfunction and returns a functionf a -> f b. This is called lifting a function.

ghci> :t fmap (*2)

fmap (*2) :: (Num a, Functor f) => f a -> f a

ghci> :t fmap (replicate 3)

fmap (replicate 3) :: (Functor f) => f a -> f [a]

Are we going to miss out on this lifting goodness? There are several neat functions under Functor typeclass. One of them is called lift:

scala> Functor[List].lift {(_: Int) * 2}

res45: List[Int] => List[Int] = <function1>

scala> res45(List(3))

res47: List[Int] = List(6)

Functor also enables some operators that overrides the values in the data structure like >|, as, fpair, strengthL, strengthR, and void:

scala> List(1, 2, 3) >| "x"

res47: List[String] = List(x, x, x)

scala> List(1, 2, 3) as "x"

res48: List[String] = List(x, x, x)

scala> List(1, 2, 3).fpair

res49: List[(Int, Int)] = List((1,1), (2,2), (3,3))

scala> List(1, 2, 3).strengthL("x")

res50: List[(String, Int)] = List((x,1), (x,2), (x,3))

scala> List(1, 2, 3).strengthR("x")

res51: List[(Int, String)] = List((1,x), (2,x), (3,x))

scala> List(1, 2, 3).void

res52: List[Unit] = List((), (), ())

Applicative

LYAHFGG:

So far, when we were mapping functions over functors, we usually mapped functions that take only one parameter. But what happens when we map a function like

*, which takes two parameters, over a functor?

scala> List(1, 2, 3, 4) map {(_: Int) * (_:Int)}

<console>:14: error: type mismatch;

found : (Int, Int) => Int

required: Int => ?

List(1, 2, 3, 4) map {(_: Int) * (_:Int)}

^

Oops. We have to curry this:

scala> List(1, 2, 3, 4) map {(_: Int) * (_:Int)}.curried

res11: List[Int => Int] = List(<function1>, <function1>, <function1>, <function1>)

scala> res11 map {_(9)}

res12: List[Int] = List(9, 18, 27, 36)

LYAHFGG:

Meet the

Applicativetypeclass. It lies in theControl.Applicativemodule and it defines two methods,pureand<*>.

Let’s see the contract for Scalaz’s Applicative:

trait Applicative[F[_]] extends Apply[F] { self =>

def point[A](a: => A): F[A]

/** alias for `point` */

def pure[A](a: => A): F[A] = point(a)

...

}

So Applicative extends another typeclass Apply, and introduces point and its alias pure.

LYAHFGG:

pureshould take a value of any type and return an applicative value with that value inside it. … A better way of thinking aboutpurewould be to say that it takes a value and puts it in some sort of default (or pure) context—a minimal context that still yields that value.

Scalaz likes the name point instead of pure, and it seems like it’s basically a constructor that takes value A and returns F[A]. It doesn’t introduce an operator, but it introduces point method and its symbolic alias η to all data types.

scala> 1.point[List]

res14: List[Int] = List(1)

scala> 1.point[Option]

res15: Option[Int] = Some(1)

scala> 1.point[Option] map {_ + 2}

res16: Option[Int] = Some(3)

scala> 1.point[List] map {_ + 2}

res17: List[Int] = List(3)

I can’t really express it in words yet, but there’s something cool about the fact that constructor is abstracted out.

Apply

LYAHFGG:

You can think of

<*>as a sort of a beefed-upfmap. Whereasfmaptakes a function and a functor and applies the function inside the functor value,<*>takes a functor that has a function in it and another functor and extracts that function from the first functor and then maps it over the second one.

trait Apply[F[_]] extends Functor[F] { self =>

def ap[A,B](fa: => F[A])(f: => F[A => B]): F[B]

}

Using ap, Apply enables <*>, *>, and <* operator.

scala> 9.some <*> {(_: Int) + 3}.some

res20: Option[Int] = Some(12)

As expected.

*> and <* are variations that returns only the rhs or lhs.

scala> 1.some <* 2.some

res35: Option[Int] = Some(1)

scala> none <* 2.some

res36: Option[Nothing] = None

scala> 1.some *> 2.some

res38: Option[Int] = Some(2)

scala> none *> 2.some

res39: Option[Int] = None

Option as Apply

We can use <*>:

scala> 9.some <*> {(_: Int) + 3}.some

res57: Option[Int] = Some(12)

scala> 3.some <*> { 9.some <*> {(_: Int) + (_: Int)}.curried.some }

res58: Option[Int] = Some(12)

Applicative Style

Another thing I found in 7.0.0-M3 is a new notation that extracts values from containers and apply them to a single function:

scala> ^(3.some, 5.some) {_ + _}

res59: Option[Int] = Some(8)

scala> ^(3.some, none[Int]) {_ + _}

res60: Option[Int] = None

This is actually useful because for one-function case, we no longer need to put it into the container. I am guessing that this is why Scalaz 7 does not introduce any operator from Applicative itself. Whatever the case, it seems like we no longer need Pointed or <$>.

The new ^(f1, f2) {...} style is not without the problem though. It doesn’t seem to handle Applicatives that takes two type parameters like Function1, Writer, and Validation. There’s another way called Applicative Builder, which apparently was the way it worked in Scalaz 6, got deprecated in M3, but will be vindicated again because of ^(f1, f2) {...}’s issues.

Here’s how it looks:

scala> (3.some |@| 5.some) {_ + _}

res18: Option[Int] = Some(8)

We will use |@| style for now.

Lists as Apply

LYAHFGG:

Lists (actually the list type constructor,

[]) are applicative functors. What a surprise!

Let’s see if we can use <*> and |@|:

scala> List(1, 2, 3) <*> List((_: Int) * 0, (_: Int) + 100, (x: Int) => x * x)

res61: List[Int] = List(0, 0, 0, 101, 102, 103, 1, 4, 9)

scala> List(3, 4) <*> { List(1, 2) <*> List({(_: Int) + (_: Int)}.curried, {(_: Int) * (_: Int)}.curried) }

res62: List[Int] = List(4, 5, 5, 6, 3, 4, 6, 8)

scala> (List("ha", "heh", "hmm") |@| List("?", "!", ".")) {_ + _}

res63: List[String] = List(ha?, ha!, ha., heh?, heh!, heh., hmm?, hmm!, hmm.)

Zip Lists

LYAHFGG:

However,

[(+3),(*2)] <*> [1,2]could also work in such a way that the first function in the left list gets applied to the first value in the right one, the second function gets applied to the second value, and so on. That would result in a list with two values, namely[4,4]. You could look at it as[1 + 3, 2 * 2].

This can be done in Scalaz, but not easily.

scala> streamZipApplicative.ap(Tags.Zip(Stream(1, 2))) (Tags.Zip(Stream({(_: Int) + 3}, {(_: Int) * 2})))

res32: scala.collection.immutable.Stream[Int] with Object{type Tag = scalaz.Tags.Zip} = Stream(4, ?)

scala> res32.toList

res33: List[Int] = List(4, 4)

We’ll see more examples of tagged type tomorrow.

Useful functions for Applicatives

LYAHFGG:

Control.Applicativedefines a function that’s calledliftA2, which has a type of

liftA2 :: (Applicative f) => (a -> b -> c) -> f a -> f b -> f c .

There’s Apply[F].lift2:

scala> Apply[Option].lift2((_: Int) :: (_: List[Int]))

res66: (Option[Int], Option[List[Int]]) => Option[List[Int]] = <function2>

scala> res66(3.some, List(4).some)

res67: Option[List[Int]] = Some(List(3, 4))

LYAHFGG:

Let’s try implementing a function that takes a list of applicatives and returns an applicative that has a list as its result value. We’ll call it

sequenceA.

sequenceA :: (Applicative f) => [f a] -> f [a]

sequenceA [] = pure []

sequenceA (x:xs) = (:) <$> x <*> sequenceA xs

Let’s try implementing this in Scalaz!

scala> def sequenceA[F[_]: Applicative, A](list: List[F[A]]): F[List[A]] = list match {

case Nil => (Nil: List[A]).point[F]

case x :: xs => (x |@| sequenceA(xs)) {_ :: _}

}

sequenceA: [F[_], A](list: List[F[A]])(implicit evidence$1: scalaz.Applicative[F])F[List[A]]

Let’s test it:

scala> sequenceA(List(1.some, 2.some))

res82: Option[List[Int]] = Some(List(1, 2))

scala> sequenceA(List(3.some, none, 1.some))

res85: Option[List[Int]] = None

scala> sequenceA(List(List(1, 2, 3), List(4, 5, 6)))

res86: List[List[Int]] = List(List(1, 4), List(1, 5), List(1, 6), List(2, 4), List(2, 5), List(2, 6), List(3, 4), List(3, 5), List(3, 6))

We got the right answers. What’s interesting here is that we did end up needing Pointed after all, and sequenceA is generic in typeclassy way.

For Function1 with Int fixed example, we have to unfortunately invoke a dark magic.

scala> type Function1Int[A] = ({type l[A]=Function1[Int, A]})#l[A]

defined type alias Function1Int

scala> sequenceA(List((_: Int) + 3, (_: Int) + 2, (_: Int) + 1): List[Function1Int[Int]])

res1: Int => List[Int] = <function1>

scala> res1(3)

res2: List[Int] = List(6, 5, 4)

It took us a while, but I am glad we got this far. We’ll pick it up from here later.

day 3

Yesterday we started with Functor, which adds map operator, and ended with polymorphic sequenceA function that uses Pointed[F].point and Applicative ^(f1, f2) {_ :: _} syntax.

Kinds and some type-foo

One section I should’ve covered yesterday from Making Our Own Types and Typeclasses but didn’t is about kinds and types. I thought it wouldn’t matter much to understand Scalaz, but it does, so we need to have the talk.

Learn You a Haskell For Great Good says:

Types are little labels that values carry so that we can reason about the values. But types have their own little labels, called kinds. A kind is more or less the type of a type. … What are kinds and what are they good for? Well, let’s examine the kind of a type by using the :k command in GHCI.

I did not find :k command for Scala REPL in Scala 2.10, so I wrote one: kind.scala. With George Leontiev (@folone), who sent in scala/scala#2340, and others’ help :kind command is now part of Scala 2.11. Let’s try using it:

scala> :k Int

scala.Int's kind is A

scala> :k -v Int

scala.Int's kind is A

*

This is a proper type.

scala> :k -v Option

scala.Option's kind is F[+A]

* -(+)-> *

This is a type constructor: a 1st-order-kinded type.

scala> :k -v Either

scala.util.Either's kind is F[+A1,+A2]

* -(+)-> * -(+)-> *

This is a type constructor: a 1st-order-kinded type.

scala> :k -v Equal

scalaz.Equal's kind is F[A]

* -> *

This is a type constructor: a 1st-order-kinded type.

scala> :k -v Functor

scalaz.Functor's kind is X[F[A]]

(* -> *) -> *

This is a type constructor that takes type constructor(s): a higher-kinded type.

From the top. Int and every other types that you can make a value out of is called a proper type and denoted with a symbol * (read “type”). This is analogous to value 1 at value-level. Using Scala’s type variable notation this could be written as A.

A first-order value, or a value constructor like (_: Int) + 3, is normally called a function. Similarly, a first-order-kinded type is a type that accepts other types to create a proper type. This is normally called a type constructor. Option, Either, and Equal are all first-order-kinded. To denote that these accept other types, we use curried notation like * -> * and * -> * -> *. Note, Option[Int] is *; Option is * -> *. Using Scala’s type variable notation they could be written as F[+A] and F[+A1,+A2].

A higher-order value like (f: Int => Int, list: List[Int]) => list map {f}, a function that accepts other functions is normally called higher-order function. Similarly, a higher-kinded type is a type constructor that accepts other type constructors. It probably should be called a higher-kinded type constructor but the name is not used. These are denoted as (* -> *) -> *. Using Scala’s type variable notation this could be written as X[F[A]].

In case of Scalaz 7.1, Equal and others have the kind F[A] while Functor and all its derivatives have the kind X[F[A]].

Scala encodes (or complects) the notion of type class using type constructor, and the terminology tend get jumbled up. For example, the data structure List forms a functor, in the sense that an instance Functor[List] can be derived for List. Since there should be only one instance for List, we can say that List is a functor. See the following discussion for more on “is-a”:

In FP, "is-a" means "an instance can be derived from." @jimduey #CPL14 It's a provable relationship, not reliant on LSP.

— Jessica Kerr (@jessitron) February 25, 2014Since List is F[+A], it’s easy to remember that F relates to a functor. Except, the typeclass definition Functor needs to wrap F[A] around, so its kind is X[F[A]]. To add to the confusion, the fact that Scala can treat type constructor as a first class variable was novel enough, that the compiler calls first-order kinded type as “higher-kinded type”:

scala> trait Test {

type F[_]

}

<console>:14: warning: higher-kinded type should be enabled

by making the implicit value scala.language.higherKinds visible.

This can be achieved by adding the import clause 'import scala.language.higherKinds'

or by setting the compiler option -language:higherKinds.

See the Scala docs for value scala.language.higherKinds for a discussion

why the feature should be explicitly enabled.

type F[_]

^

You normally don’t have to worry about this if you are using injected operators like:

scala> List(1, 2, 3).shows

res11: String = [1,2,3]

But if you want to use Show[A].shows, you have to know it’s Show[List[Int]], not Show[List]. Similarly, if you want to lift a function, you need to know that it’s Functor[F] (F is for Functor):

scala> Functor[List[Int]].lift((_: Int) + 2)

<console>:14: error: List[Int] takes no type parameters, expected: one

Functor[List[Int]].lift((_: Int) + 2)

^

scala> Functor[List].lift((_: Int) + 2)

res13: List[Int] => List[Int] = <function1>

In the cheat sheet I started I originally had type parameters for Equal written as Equal[F], which is the same as Scalaz 7’s source code. Adam Rosien pointed out to me that it should be Equal[A].

@eed3si9n love the scalaz cheat sheet start, but using the type param F usually means Functor, what about A instead?

— Adam Rosien (@arosien) September 1, 2012

Now it makes sense why!

Tagged type

If you have the book Learn You a Haskell for Great Good you get to start a new chapter: Monoids. For the website, it’s still Functors, Applicative Functors and Monoids.

LYAHFGG:

The newtype keyword in Haskell is made exactly for these cases when we want to just take one type and wrap it in something to present it as another type.

This is a language-level feature in Haskell, so one would think we can’t port it over to Scala.

About an year ago (September 2011) Miles Sabin (@milessabin) wrote a gist and called it Tagged and Jason Zaugg (@retronym) added @@ type alias.

type Tagged[U] = { type Tag = U }

type @@[T, U] = T with Tagged[U]

Eric Torreborre (@etorreborre) wrote Practical uses for Unboxed Tagged Types and Tim Perrett wrote Unboxed new types within Scalaz7 if you want to read up on it.

Suppose we want a way to express mass using kilogram, because kg is the international standard of unit. Normally we would pass in Double and call it a day, but we can’t distinguish that from other Double values. Can we use case class for this?

case class KiloGram(value: Double)

Although it does adds type safety, it’s not fun to use because we have to call x.value every time we need to extract the value out of it. Tagged type to the rescue.

scala> sealed trait KiloGram

defined trait KiloGram

scala> def KiloGram[A](a: A): A @@ KiloGram = Tag[A, KiloGram](a)

KiloGram: [A](a: A)scalaz.@@[A,KiloGram]

scala> val mass = KiloGram(20.0)

mass: scalaz.@@[Double,KiloGram] = 20.0

scala> 2 * Tag.unwrap(mass) // this doesn't work on REPL

res2: Double = 40.0

scala> 2 * Tag.unwrap(mass)

<console>:17: error: wrong number of type parameters for method unwrap$mDc$sp: [T](a: Object{type Tag = T; type Self = Double})Double

2 * Tag.unwrap(mass)

^

scala> 2 * scalaz.Tag.unsubst[Double, Id, KiloGram](mass)

res2: Double = 40.0

Note: As of scalaz 7.1 we need to explicitly unwrap tags. Previously we could just do 2 * mass. Due to a problem on REPL

SI-8871, Tag.unwrap doesn’t work, so I had to use Tag.unsubst.

Just to be clear, A @@ KiloGram is an infix notation of scalaz.@@[A, KiloGram]. We can now define a function that calculates relativistic energy.

scala> sealed trait JoulePerKiloGram

defined trait JoulePerKiloGram

scala> def JoulePerKiloGram[A](a: A): A @@ JoulePerKiloGram = Tag[A, JoulePerKiloGram](a)

JoulePerKiloGram: [A](a: A)scalaz.@@[A,JoulePerKiloGram]

scala> def energyR(m: Double @@ KiloGram): Double @@ JoulePerKiloGram =

JoulePerKiloGram(299792458.0 * 299792458.0 * Tag.unsubst[Double, Id, KiloGram](m))

energyR: (m: scalaz.@@[Double,KiloGram])scalaz.@@[Double,JoulePerKiloGram]

scala> energyR(mass)

res4: scalaz.@@[Double,JoulePerKiloGram] = 1.79751035747363533E18

scala> energyR(10.0)

<console>:18: error: type mismatch;

found : Double(10.0)

required: scalaz.@@[Double,KiloGram]

(which expands to) AnyRef{type Tag = KiloGram; type Self = Double}

energyR(10.0)

^

As you can see, passing in plain Double to energyR fails at compile-time. This sounds exactly like newtype except it’s even better because we can define Int @@ KiloGram if we want.

About those Monoids

LYAHFGG:

It seems that both

*together with1and++along with[]share some common properties: - The function takes two parameters. - The parameters and the returned value have the same type. - There exists such a value that doesn’t change other values when used with the binary function.

Let’s check it out in Scala:

scala> 4 * 1

res16: Int = 4

scala> 1 * 9

res17: Int = 9

scala> List(1, 2, 3) ++ Nil

res18: List[Int] = List(1, 2, 3)

scala> Nil ++ List(0.5, 2.5)

res19: List[Double] = List(0.5, 2.5)

Looks right.

LYAHFGG:

It doesn’t matter if we do

(3 * 4) * 5or3 * (4 * 5). Either way, the result is60. The same goes for++. … We call this property associativity.*is associative, and so is++, but-, for example, is not.

Let’s check this too:

scala> (3 * 2) * (8 * 5) assert_=== 3 * (2 * (8 * 5))

scala> List("la") ++ (List("di") ++ List("da")) assert_=== (List("la") ++ List("di")) ++ List("da")

No error means, they are equal. Apparently this is what monoid is.

Monoid

LYAHFGG:

A monoid is when you have an associative binary function and a value which acts as an identity with respect to that function.

Let’s see the typeclass contract for Monoid in Scalaz:

trait Monoid[A] extends Semigroup[A] { self =>

////

/** The identity element for `append`. */

def zero: A

...

}

Semigroup

Looks like Monoid extends Semigroup so let’s look at its typeclass.

trait Semigroup[A] { self =>

def append(a1: A, a2: => A): A

...

}

Here are the operators:

trait SemigroupOps[A] extends Ops[A] {

final def |+|(other: => A): A = A.append(self, other)

final def mappend(other: => A): A = A.append(self, other)

final def ⊹(other: => A): A = A.append(self, other)

}

It introduces mappend operator with symbolic alias |+| and ⊹.

LYAHFGG:

We have

mappend, which, as you’ve probably guessed, is the binary function. It takes two values of the same type and returns a value of that type as well.

LYAHFGG also warns that just because it’s named mappend it does not mean it’s appending something, like in the case of *. Let’s try using this.

scala> List(1, 2, 3) mappend List(4, 5, 6)

res23: List[Int] = List(1, 2, 3, 4, 5, 6)

scala> "one" mappend "two"

res25: String = onetwo

I think the idiomatic Scalaz way is to use |+|:

scala> List(1, 2, 3) |+| List(4, 5, 6)

res26: List[Int] = List(1, 2, 3, 4, 5, 6)

scala> "one" |+| "two"

res27: String = onetwo

This looks more concise.

Back to Monoid

trait Monoid[A] extends Semigroup[A] { self =>

////

/** The identity element for `append`. */

def zero: A

...

}

LYAHFGG:

memptyrepresents the identity value for a particular monoid.

Scalaz calls this zero instead.

scala> Monoid[List[Int]].zero

res15: List[Int] = List()

scala> Monoid[String].zero

res16: String = ""

Tags.Multiplication

LYAHFGG:

So now that there are two equally valid ways for numbers (addition and multiplication) to be monoids, which way do choose? Well, we don’t have to.

This is where Scalaz 7.1 uses tagged type. The built-in tags are Tags. There are 8 tags for Monoids and 1 named Zip for Applicative. (Is this the Zip List I couldn’t find yesterday?)

scala> Tags.Multiplication(10) |+| Monoid[Int @@ Tags.Multiplication].zero

res21: scalaz.@@[Int,scalaz.Tags.Multiplication] = 10

Nice! So we can multiply numbers using |+|. For addition, we use plain Int.

scala> 10 |+| Monoid[Int].zero

res22: Int = 10

Tags.Disjunction and Tags.Conjunction

LYAHFGG:

Another type which can act like a monoid in two distinct but equally valid ways is

Bool. The first way is to have the or function||act as the binary function along withFalseas the identity value. … The other way forBoolto be an instance ofMonoidis to kind of do the opposite: have&&be the binary function and then makeTruethe identity value.

In Scalaz 7 these are called Boolean @@ Tags.Disjunction and Boolean @@ Tags.Conjunction respectively.

scala> Tags.Disjunction(true) |+| Tags.Disjunction(false)

res28: scalaz.@@[Boolean,scalaz.Tags.Disjunction] = true

scala> Monoid[Boolean @@ Tags.Disjunction].zero |+| Tags.Disjunction(true)

res29: scalaz.@@[Boolean,scalaz.Tags.Disjunction] = true

scala> Monoid[Boolean @@ Tags.Disjunction].zero |+| Monoid[Boolean @@ Tags.Disjunction].zero

res30: scalaz.@@[Boolean,scalaz.Tags.Disjunction] = false

scala> Monoid[Boolean @@ Tags.Conjunction].zero |+| Tags.Conjunction(true)

res31: scalaz.@@[Boolean,scalaz.Tags.Conjunction] = true

scala> Monoid[Boolean @@ Tags.Conjunction].zero |+| Tags.Conjunction(false)

res32: scalaz.@@[Boolean,scalaz.Tags.Conjunction] = false

Ordering as Monoid

LYAHFGG:

With

Ordering, we have to look a bit harder to recognize a monoid, but it turns out that itsMonoidinstance is just as intuitive as the ones we’ve met so far and also quite useful.

Sounds odd, but let’s check it out.

scala> Ordering.LT |+| Ordering.GT

<console>:14: error: value |+| is not a member of object scalaz.Ordering.LT

Ordering.LT |+| Ordering.GT

^

scala> (Ordering.LT: Ordering) |+| (Ordering.GT: Ordering)

res42: scalaz.Ordering = LT

scala> (Ordering.GT: Ordering) |+| (Ordering.LT: Ordering)

res43: scalaz.Ordering = GT

scala> Monoid[Ordering].zero |+| (Ordering.LT: Ordering)

res44: scalaz.Ordering = LT

scala> Monoid[Ordering].zero |+| (Ordering.GT: Ordering)

res45: scalaz.Ordering = GT

LYAHFGG:

OK, so how is this monoid useful? Let’s say you were writing a function that takes two strings, compares their lengths, and returns an

Ordering. But if the strings are of the same length, then instead of returningEQright away, we want to compare them alphabetically.

Because the left comparison is kept unless it’s Ordering.EQ we can use this to compose two levels of comparisons. Let’s try implementing lengthCompare using Scalaz:

scala> def lengthCompare(lhs: String, rhs: String): Ordering =

(lhs.length ?|? rhs.length) |+| (lhs ?|? rhs)

lengthCompare: (lhs: String, rhs: String)scalaz.Ordering

scala> lengthCompare("zen", "ants")

res46: scalaz.Ordering = LT

scala> lengthCompare("zen", "ant")

res47: scalaz.Ordering = GT

It works. “zen” is LT compared to “ants” because it’s shorter.

We still have more Monoids, but let’s call it a day. We’ll pick it up from here later.

day 4

Yesterday we reviewed kinds and types, explored Tagged type, and started looking at Semigroup and Monoid as a way of abstracting binary operations over various types.

Also a comment from Jason Zaugg:

This might be a good point to pause and discuss the laws by which a well behaved type class instance must abide.

I’ve been skipping all the sections in Learn You a Haskell for Great Good about the laws and we got pulled over.

Functor Laws

LYAHFGG:

All functors are expected to exhibit certain kinds of functor-like properties and behaviors. … The first functor law states that if we map the id function over a functor, the functor that we get back should be the same as the original functor.

In other words,

scala> List(1, 2, 3) map {identity} assert_=== List(1, 2, 3)

The second law says that composing two functions and then mapping the resulting function over a functor should be the same as first mapping one function over the functor and then mapping the other one.

In other words,

scala> (List(1, 2, 3) map {{(_: Int) * 3} map {(_: Int) + 1}}) assert_=== (List(1, 2, 3) map {(_: Int) * 3} map {(_: Int) + 1})

These are laws the implementer of the functors must abide, and not something the compiler can check for you. Scalaz 7+ ships with FunctorLaw traits that describes this in code:

trait FunctorLaw {

/** The identity function, lifted, is a no-op. */

def identity[A](fa: F[A])(implicit FA: Equal[F[A]]): Boolean = FA.equal(map(fa)(x => x), fa)

/**

* A series of maps may be freely rewritten as a single map on a

* composed function.

*/

def associative[A, B, C](fa: F[A], f1: A => B, f2: B => C)(implicit FC: Equal[F[C]]): Boolean = FC.equal(map(map(fa)(f1))(f2), map(fa)(f2 compose f1))

}

Not only that, it ships with ScalaCheck bindings to test these properties using arbitrary values. Here’s the build.sbt to check from REPL:

scalaVersion := "2.11.2"

val scalazVersion = "7.1.0"

libraryDependencies ++= Seq(

"org.scalaz" %% "scalaz-core" % scalazVersion,

"org.scalaz" %% "scalaz-effect" % scalazVersion,

"org.scalaz" %% "scalaz-typelevel" % scalazVersion,

"org.scalaz" %% "scalaz-scalacheck-binding" % scalazVersion % "test"

)

scalacOptions += "-feature"

initialCommands in console := "import scalaz._, Scalaz._"

initialCommands in console in Test := "import scalaz._, Scalaz._, scalacheck.ScalazProperties._, scalacheck.ScalazArbitrary._,scalacheck.ScalaCheckBinding._"

Instead of the usual sbt console, run sbt test:console:

$ sbt test:console

[info] Starting scala interpreter...

[info]

import scalaz._

import Scalaz._

import scalacheck.ScalazProperties._

import scalacheck.ScalazArbitrary._

import scalacheck.ScalaCheckBinding._

Welcome to Scala version 2.10.3 (Java HotSpot(TM) 64-Bit Server VM, Java 1.6.0_45).

Type in expressions to have them evaluated.

Type :help for more information.

scala>

Here’s how you test if List meets the functor laws:

scala> functor.laws[List].check

+ functor.identity: OK, passed 100 tests.

+ functor.associative: OK, passed 100 tests.

Breaking the law

Following the book, let’s try breaking the law.

scala> :paste

// Entering paste mode (ctrl-D to finish)

sealed trait COption[+A] {}

case class CSome[A](counter: Int, a: A) extends COption[A]

case object CNone extends COption[Nothing]

implicit def coptionEqual[A]: Equal[COption[A]] = Equal.equalA

implicit val coptionFunctor = new Functor[COption] {

def map[A, B](fa: COption[A])(f: A => B): COption[B] = fa match {

case CNone => CNone

case CSome(c, a) => CSome(c + 1, f(a))

}

}

// Exiting paste mode, now interpreting.

defined trait COption

defined class CSome

defined module CNone

coptionEqual: [A]=> scalaz.Equal[COption[A]]

coptionFunctor: scalaz.Functor[COption] = $anon$1@42538425

scala> (CSome(0, "ho"): COption[String]) map {(_: String) + "ha"}

res4: COption[String] = CSome(1,hoha)

scala> (CSome(0, "ho"): COption[String]) map {identity}

res5: COption[String] = CSome(1,ho)

It’s breaking the first law. Let’s see if we can catch this.

scala> functor.laws[COption].check

<console>:26: error: could not find implicit value for parameter af: org.scalacheck.Arbitrary[COption[Int]]

functor.laws[COption].check

^

So now we have to supply “arbitrary” COption[A] implicitly:

scala> import org.scalacheck.{Gen, Arbitrary}

import org.scalacheck.{Gen, Arbitrary}

scala> implicit def COptionArbiterary[A](implicit a: Arbitrary[A]): Arbitrary[COption[A]] =

a map { a => (CSome(0, a): COption[A]) }

COptionArbiterary: [A](implicit a: org.scalacheck.Arbitrary[A])org.scalacheck.Arbitrary[COption[A]]

This is pretty cool. ScalaCheck on its own does not ship map method, but Scalaz injected it as a Functor[Arbitrary]! Not much of an arbitrary COption, but I don’t know enough ScalaCheck, so this will have to do.

scala> functor.laws[COption].check

! functor.identity: Falsified after 0 passed tests.

> ARG_0: CSome(0,-170856004)

! functor.associative: Falsified after 0 passed tests.

> ARG_0: CSome(0,1)

> ARG_1: <function1>

> ARG_2: <function1>

And the test fails as expected.

Applicative Laws

Here are the laws for Applicative:

trait ApplicativeLaw extends FunctorLaw {

def identityAp[A](fa: F[A])(implicit FA: Equal[F[A]]): Boolean =

FA.equal(ap(fa)(point((a: A) => a)), fa)

def composition[A, B, C](fbc: F[B => C], fab: F[A => B], fa: F[A])(implicit FC: Equal[F[C]]) =

FC.equal(ap(ap(fa)(fab))(fbc), ap(fa)(ap(fab)(ap(fbc)(point((bc: B => C) => (ab: A => B) => bc compose ab)))))

def homomorphism[A, B](ab: A => B, a: A)(implicit FB: Equal[F[B]]): Boolean =

FB.equal(ap(point(a))(point(ab)), point(ab(a)))

def interchange[A, B](f: F[A => B], a: A)(implicit FB: Equal[F[B]]): Boolean =

FB.equal(ap(point(a))(f), ap(f)(point((f: A => B) => f(a))))

}

LYAHFGG is skipping the details on this, so I am skipping too.

Semigroup Laws

Here are the Semigroup Laws:

/**

* A semigroup in type F must satisfy two laws:

*

* - '''closure''': `∀ a, b in F, append(a, b)` is also in `F`. This is enforced by the type system.

* - '''associativity''': `∀ a, b, c` in `F`, the equation `append(append(a, b), c) = append(a, append(b , c))` holds.

*/

trait SemigroupLaw {

def associative(f1: F, f2: F, f3: F)(implicit F: Equal[F]): Boolean =

F.equal(append(f1, append(f2, f3)), append(append(f1, f2), f3))

}

Remember, 1 * (2 * 3) and (1 * 2) * 3 must hold, which is called associative.

scala> semigroup.laws[Int @@ Tags.Multiplication].check

+ semigroup.associative: OK, passed 100 tests.

Monoid Laws

Here are the Monoid Laws:

/**

* Monoid instances must satisfy [[scalaz.Semigroup.SemigroupLaw]] and 2 additional laws:

*

* - '''left identity''': `forall a. append(zero, a) == a`

* - '''right identity''' : `forall a. append(a, zero) == a`

*/

trait MonoidLaw extends SemigroupLaw {

def leftIdentity(a: F)(implicit F: Equal[F]) = F.equal(a, append(zero, a))

def rightIdentity(a: F)(implicit F: Equal[F]) = F.equal(a, append(a, zero))

}

This law is simple. I can |+| (mappend) identity value to either left hand side or right hand side. For multiplication:

scala> 1 * 2 assert_=== 2

scala> 2 * 1 assert_=== 2

Using Scalaz:

scala> (Monoid[Int @@ Tags.Multiplication].zero |+| Tags.Multiplication(2): Int) assert_=== 2

scala> (Tags.Multiplication(2) |+| Monoid[Int @@ Tags.Multiplication].zero: Int) assert_=== 2

scala> monoid.laws[Int @@ Tags.Multiplication].check

+ monoid.semigroup.associative: OK, passed 100 tests.

+ monoid.left identity: OK, passed 100 tests.

+ monoid.right identity: OK, passed 100 tests.

Option as Monoid

LYAHFGG:

One way is to treat

Maybe aas a monoid only if its type parameter a is a monoid as well and then implement mappend in such a way that it uses the mappend operation of the values that are wrapped withJust.

Let’s see if this is how Scalaz does it. See std/Option.scala:

implicit def optionMonoid[A: Semigroup]: Monoid[Option[A]] = new Monoid[Option[A]] {

def append(f1: Option[A], f2: => Option[A]) = (f1, f2) match {

case (Some(a1), Some(a2)) => Some(Semigroup[A].append(a1, a2))

case (Some(a1), None) => f1

case (None, Some(a2)) => f2

case (None, None) => None

}

def zero: Option[A] = None

}

The implementation is nice and simple. Context bound A: Semigroup says that A must support |+|. The rest is pattern matching. Doing exactly what the book says.

scala> (none: Option[String]) |+| "andy".some

res23: Option[String] = Some(andy)

scala> (Ordering.LT: Ordering).some |+| none

res25: Option[scalaz.Ordering] = Some(LT)

It works.

LYAHFGG:

But if we don’t know if the contents are monoids, we can’t use

mappendbetween them, so what are we to do? Well, one thing we can do is to just discard the second value and keep the first one. For this, theFirst atype exists.

Haskell is using newtype to implement First type constructor. Scalaz 7 does it using mightly Tagged type:

scala> Tags.First('a'.some) |+| Tags.First('b'.some)

res26: scalaz.@@[Option[Char],scalaz.Tags.First] = Some(a)

scala> Tags.First(none: Option[Char]) |+| Tags.First('b'.some)

res27: scalaz.@@[Option[Char],scalaz.Tags.First] = Some(b)

scala> Tags.First('a'.some) |+| Tags.First(none: Option[Char])

res28: scalaz.@@[Option[Char],scalaz.Tags.First] = Some(a)

LYAHFGG:

If we want a monoid on

Maybe asuch that the second parameter is kept if both parameters ofmappendareJustvalues,Data.Monoidprovides a theLast atype.

This is Tags.Last:

scala> Tags.Last('a'.some) |+| Tags.Last('b'.some)

res29: scalaz.@@[Option[Char],scalaz.Tags.Last] = Some(b)

scala> Tags.Last(none: Option[Char]) |+| Tags.Last('b'.some)

res30: scalaz.@@[Option[Char],scalaz.Tags.Last] = Some(b)

scala> Tags.Last('a'.some) |+| Tags.Last(none: Option[Char])

res31: scalaz.@@[Option[Char],scalaz.Tags.Last] = Some(a)

Foldable

LYAHFGG:

Because there are so many data structures that work nicely with folds, the

Foldabletype class was introduced. Much likeFunctoris for things that can be mapped over, Foldable is for things that can be folded up!

The equivalent in Scalaz is also called Foldable. Let’s see the typeclass contract:

trait Foldable[F[_]] { self =>

/** Map each element of the structure to a [[scalaz.Monoid]], and combine the results. */

def foldMap[A,B](fa: F[A])(f: A => B)(implicit F: Monoid[B]): B

/**Right-associative fold of a structure. */

def foldRight[A, B](fa: F[A], z: => B)(f: (A, => B) => B): B

...

}

Here are the operators:

/** Wraps a value `self` and provides methods related to `Foldable` */

trait FoldableOps[F[_],A] extends Ops[F[A]] {

implicit def F: Foldable[F]

////

final def foldMap[B: Monoid](f: A => B = (a: A) => a): B = F.foldMap(self)(f)

final def foldRight[B](z: => B)(f: (A, => B) => B): B = F.foldRight(self, z)(f)

final def foldLeft[B](z: B)(f: (B, A) => B): B = F.foldLeft(self, z)(f)

final def foldRightM[G[_], B](z: => B)(f: (A, => B) => G[B])(implicit M: Monad[G]): G[B] = F.foldRightM(self, z)(f)

final def foldLeftM[G[_], B](z: B)(f: (B, A) => G[B])(implicit M: Monad[G]): G[B] = F.foldLeftM(self, z)(f)

final def foldr[B](z: => B)(f: A => (=> B) => B): B = F.foldr(self, z)(f)

final def foldl[B](z: B)(f: B => A => B): B = F.foldl(self, z)(f)

final def foldrM[G[_], B](z: => B)(f: A => ( => B) => G[B])(implicit M: Monad[G]): G[B] = F.foldrM(self, z)(f)

final def foldlM[G[_], B](z: B)(f: B => A => G[B])(implicit M: Monad[G]): G[B] = F.foldlM(self, z)(f)

final def foldr1(f: (A, => A) => A): Option[A] = F.foldr1(self)(f)

final def foldl1(f: (A, A) => A): Option[A] = F.foldl1(self)(f)

final def sumr(implicit A: Monoid[A]): A = F.foldRight(self, A.zero)(A.append)

final def suml(implicit A: Monoid[A]): A = F.foldLeft(self, A.zero)(A.append(_, _))

final def toList: List[A] = F.toList(self)

final def toIndexedSeq: IndexedSeq[A] = F.toIndexedSeq(self)

final def toSet: Set[A] = F.toSet(self)

final def toStream: Stream[A] = F.toStream(self)

final def all(p: A => Boolean): Boolean = F.all(self)(p)

final def ∀(p: A => Boolean): Boolean = F.all(self)(p)

final def allM[G[_]: Monad](p: A => G[Boolean]): G[Boolean] = F.allM(self)(p)

final def anyM[G[_]: Monad](p: A => G[Boolean]): G[Boolean] = F.anyM(self)(p)

final def any(p: A => Boolean): Boolean = F.any(self)(p)

final def ∃(p: A => Boolean): Boolean = F.any(self)(p)

final def count: Int = F.count(self)

final def maximum(implicit A: Order[A]): Option[A] = F.maximum(self)

final def minimum(implicit A: Order[A]): Option[A] = F.minimum(self)

final def longDigits(implicit d: A <:< Digit): Long = F.longDigits(self)

final def empty: Boolean = F.empty(self)

final def element(a: A)(implicit A: Equal[A]): Boolean = F.element(self, a)

final def splitWith(p: A => Boolean): List[List[A]] = F.splitWith(self)(p)

final def selectSplit(p: A => Boolean): List[List[A]] = F.selectSplit(self)(p)

final def collapse[X[_]](implicit A: ApplicativePlus[X]): X[A] = F.collapse(self)

final def concatenate(implicit A: Monoid[A]): A = F.fold(self)

final def traverse_[M[_]:Applicative](f: A => M[Unit]): M[Unit] = F.traverse_(self)(f)

////

}

That was impressive. Looks almost like the collection libraries, except it’s taking advantage of typeclasses like Order. Let’s try folding:

scala> List(1, 2, 3).foldRight (1) {_ * _}

res49: Int = 6

scala> 9.some.foldLeft(2) {_ + _}

res50: Int = 11

These are already in the standard library. Let’s try the foldMap operator. Monoid[A] gives us zero and |+|, so that’s enough information to fold things over. Since we can’t assume that Foldable contains a monoid we need a function to change from A => B where [B: Monoid]:

scala> List(1, 2, 3) foldMap {identity}

res53: Int = 6

scala> List(true, false, true, true) foldMap {Tags.Disjunction.apply}

res56: scalaz.@@[Boolean,scalaz.Tags.Disjunction] = true

This surely beats writing Tags.Disjunction(true) for each of them and connecting them with |+|.

We will pick it up from here later. I’ll be out on a business trip, it might slow down.

day 5

On day 4 we reviewed typeclass laws like Functor laws and used ScalaCheck to validate on arbitrary examples of a typeclass. We also looked at three different ways of using Option as Monoid, and looked at Foldable that can foldMap etc.

A fist full of Monads

We get to start a new chapter today on Learn You a Haskell for Great Good.

Monads are a natural extension applicative functors, and they provide a solution to the following problem: If we have a value with context,

m a, how do we apply it to a function that takes a normalaand returns a value with a context.

The equivalent is called Monad in Scalaz. Here’s the typeclass contract:

trait Monad[F[_]] extends Applicative[F] with Bind[F] { self =>

////

}

It extends Applicative and Bind. So let’s look at Bind.

Bind

Here’s Bind’s contract:

trait Bind[F[_]] extends Apply[F] { self =>

/** Equivalent to `join(map(fa)(f))`. */

def bind[A, B](fa: F[A])(f: A => F[B]): F[B]

}

And here are the operators:

/** Wraps a value `self` and provides methods related to `Bind` */

trait BindOps[F[_],A] extends Ops[F[A]] {

implicit def F: Bind[F]

////

import Liskov.<~<

def flatMap[B](f: A => F[B]) = F.bind(self)(f)

def >>=[B](f: A => F[B]) = F.bind(self)(f)

def ∗[B](f: A => F[B]) = F.bind(self)(f)

def join[B](implicit ev: A <~< F[B]): F[B] = F.bind(self)(ev(_))

def μ[B](implicit ev: A <~< F[B]): F[B] = F.bind(self)(ev(_))

def >>[B](b: F[B]): F[B] = F.bind(self)(_ => b)

def ifM[B](ifTrue: => F[B], ifFalse: => F[B])(implicit ev: A <~< Boolean): F[B] = {

val value: F[Boolean] = Liskov.co[F, A, Boolean](ev)(self)

F.ifM(value, ifTrue, ifFalse)

}

////

}

It introduces flatMap operator and its symbolic aliases >>= and ∗. We’ll worry about the other operators later. We are use to flapMap from the standard library:

scala> 3.some flatMap { x => (x + 1).some }

res2: Option[Int] = Some(4)

scala> (none: Option[Int]) flatMap { x => (x + 1).some }

res3: Option[Int] = None

Monad

Back to Monad:

trait Monad[F[_]] extends Applicative[F] with Bind[F] { self =>

////

}

Unlike Haskell, Monad[F[_]] exntends Applicative[F[_]] so there’s no return vs pure issues. They both use point.

scala> Monad[Option].point("WHAT")

res5: Option[String] = Some(WHAT)

scala> 9.some flatMap { x => Monad[Option].point(x * 10) }

res6: Option[Int] = Some(90)

scala> (none: Option[Int]) flatMap { x => Monad[Option].point(x * 10) }

res7: Option[Int] = None

Walk the line

LYAHFGG:

Let’s say that [Pierre] keeps his balance if the number of birds on the left side of the pole and on the right side of the pole is within three. So if there’s one bird on the right side and four birds on the left side, he’s okay. But if a fifth bird lands on the left side, then he loses his balance and takes a dive.

Now let’s try implementing Pole example from the book.

scala> type Birds = Int

defined type alias Birds

scala> case class Pole(left: Birds, right: Birds)

defined class Pole

I don’t think it’s common to alias Int like this in Scala, but we’ll go with the flow. I am going to turn Pole into a case class so I can implement landLeft and landRight as methods:

scala> case class Pole(left: Birds, right: Birds) {

def landLeft(n: Birds): Pole = copy(left = left + n)

def landRight(n: Birds): Pole = copy(right = right + n)

}

defined class Pole

I think it looks better with some OO:

scala> Pole(0, 0).landLeft(2)

res10: Pole = Pole(2,0)

scala> Pole(1, 2).landRight(1)

res11: Pole = Pole(1,3)

scala> Pole(1, 2).landRight(-1)

res12: Pole = Pole(1,1)

We can chain these too:

scala> Pole(0, 0).landLeft(1).landRight(1).landLeft(2)

res13: Pole = Pole(3,1)

scala> Pole(0, 0).landLeft(1).landRight(4).landLeft(-1).landRight(-2)

res15: Pole = Pole(0,2)

As the book says, an intermediate value have failed but the calculation kept going. Now let’s introduce failures as Option[Pole]:

scala> case class Pole(left: Birds, right: Birds) {

def landLeft(n: Birds): Option[Pole] =

if (math.abs((left + n) - right) < 4) copy(left = left + n).some

else none

def landRight(n: Birds): Option[Pole] =

if (math.abs(left - (right + n)) < 4) copy(right = right + n).some

else none

}

defined class Pole

scala> Pole(0, 0).landLeft(2)

res16: Option[Pole] = Some(Pole(2,0))

scala> Pole(0, 3).landLeft(10)

res17: Option[Pole] = None

Let’s try the chaining using flatMap:

scala> Pole(0, 0).landRight(1) flatMap {_.landLeft(2)}

res18: Option[Pole] = Some(Pole(2,1))

scala> (none: Option[Pole]) flatMap {_.landLeft(2)}

res19: Option[Pole] = None

scala> Monad[Option].point(Pole(0, 0)) flatMap {_.landRight(2)} flatMap {_.landLeft(2)} flatMap {_.landRight(2)}

res21: Option[Pole] = Some(Pole(2,4))

Note the use of Monad[Option].point(...) here to start the initial value in Option context. We can also try the >>= alias to make it look more monadic:

scala> Monad[Option].point(Pole(0, 0)) >>= {_.landRight(2)} >>= {_.landLeft(2)} >>= {_.landRight(2)}

res22: Option[Pole] = Some(Pole(2,4))

Let’s see if monadic chaining simulates the pole balancing better:

scala> Monad[Option].point(Pole(0, 0)) >>= {_.landLeft(1)} >>= {_.landRight(4)} >>= {_.landLeft(-1)} >>= {_.landRight(-2)}

res23: Option[Pole] = None

It works.

Banana on wire

LYAHFGG:

We may also devise a function that ignores the current number of birds on the balancing pole and just makes Pierre slip and fall. We can call it

banana.

Here’s the banana that always fails:

scala> case class Pole(left: Birds, right: Birds) {

def landLeft(n: Birds): Option[Pole] =

if (math.abs((left + n) - right) < 4) copy(left = left + n).some

else none

def landRight(n: Birds): Option[Pole] =

if (math.abs(left - (right + n)) < 4) copy(right = right + n).some

else none

def banana: Option[Pole] = none

}

defined class Pole

scala> Monad[Option].point(Pole(0, 0)) >>= {_.landLeft(1)} >>= {_.banana} >>= {_.landRight(1)}

res24: Option[Pole] = None

LYAHFGG:

Instead of making functions that ignore their input and just return a predetermined monadic value, we can use the

>>function.

Here’s how >> behaves with Option:

scala> (none: Option[Int]) >> 3.some

res25: Option[Int] = None

scala> 3.some >> 4.some

res26: Option[Int] = Some(4)

scala> 3.some >> (none: Option[Int])

res27: Option[Int] = None

Let’s try replacing banana with >> (none: Option[Pole]):

scala> Monad[Option].point(Pole(0, 0)) >>= {_.landLeft(1)} >> (none: Option[Pole]) >>= {_.landRight(1)}

<console>:26: error: missing parameter type for expanded function ((x$1) => x$1.landLeft(1))

Monad[Option].point(Pole(0, 0)) >>= {_.landLeft(1)} >> (none: Option[Pole]) >>= {_.landRight(1)}

^

The type inference broke down all the sudden. The problem is likely the operator precedence. Programming in Scala says:

The one exception to the precedence rule, alluded to above, concerns assignment operators, which end in an equals character. If an operator ends in an equals character (

=), and the operator is not one of the comparison operators<=,>=,==, or!=, then the precedence of the operator is the same as that of simple assignment (=). That is, it is lower than the precedence of any other operator.

Note: The above description is incomplete. Another exception from the assignment operator rule is if it starts with (=) like ===.

Because >>= (bind) ends in equals character, its precedence is the lowest, which forces ({_.landLeft(1)} >> (none: Option[Pole])) to evaluate first. There are a few unpalatable work arounds. First we can use dot-and-parens like normal method calls:

scala> Monad[Option].point(Pole(0, 0)).>>=({_.landLeft(1)}).>>(none: Option[Pole]).>>=({_.landRight(1)})

res9: Option[Pole] = None

Or recognize the precedence issue and place parens around just the right place:

scala> (Monad[Option].point(Pole(0, 0)) >>= {_.landLeft(1)}) >> (none: Option[Pole]) >>= {_.landRight(1)}

res10: Option[Pole] = None

Both yield the right result. By the way, changing >>= to flatMap is not going to help since >> still has higher precedence.

for syntax

LYAHFGG:

Monads in Haskell are so useful that they got their own special syntax called

donotation.

First, let write the nested lambda:

scala> 3.some >>= { x => "!".some >>= { y => (x.shows + y).some } }

res14: Option[String] = Some(3!)

By using >>=, any part of the calculation can fail:

scala> 3.some >>= { x => (none: Option[String]) >>= { y => (x.shows + y).some } }

res17: Option[String] = None

scala> (none: Option[Int]) >>= { x => "!".some >>= { y => (x.shows + y).some } }

res16: Option[String] = None

scala> 3.some >>= { x => "!".some >>= { y => (none: Option[String]) } }

res18: Option[String] = None

Instead of the do notation in Haskell, Scala has for syntax, which does the same thing:

scala> for {

x <- 3.some

y <- "!".some

} yield (x.shows + y)

res19: Option[String] = Some(3!)

LYAHFGG:

In a

doexpression, every line that isn’t aletline is a monadic value.

I think this applies true for Scala’s for syntax too.

Pierre returns

LYAHFGG:

Our tightwalker’s routine can also be expressed with

donotation.

scala> def routine: Option[Pole] =

for {

start <- Monad[Option].point(Pole(0, 0))

first <- start.landLeft(2)

second <- first.landRight(2)

third <- second.landLeft(1)

} yield third

routine: Option[Pole]

scala> routine

res20: Option[Pole] = Some(Pole(3,2))

We had to extract third since yield expects Pole not Option[Pole].

LYAHFGG:

If we want to throw the Pierre a banana peel in

donotation, we can do the following:

scala> def routine: Option[Pole] =

for {

start <- Monad[Option].point(Pole(0, 0))

first <- start.landLeft(2)

_ <- (none: Option[Pole])

second <- first.landRight(2)

third <- second.landLeft(1)

} yield third

routine: Option[Pole]

scala> routine

res23: Option[Pole] = None

Pattern matching and failure

LYAHFGG:

In